In the race to harness AI, enterprises once believed bigger meant better. Large Language Models (LLMs) promised scale, insights across departments, and automation that could transform operations. For you and your team, they offered the ability to analyze vast amounts of data and gain organization-wide visibility. Yet as adoption grew, their limitations became clear: slower responses during critical operations, rising infrastructure costs, and governance complexity that often slowed decision-making. While LLMs delivered breadth, they didn’t always provide the speed, precision, or control modern teams truly need.

This gap created the need for Small Language Models (SLMs). Lean, domain-focused, and purpose-built, SLMs deliver millisecond responses, reduce operational costs by up to 60%, and provide tighter control over sensitive data. The results are tangible: Bayer achieved a 40% increase in predictive accuracy, and Gartner predicts that by 2027 organizations will use small, task-specific models three times more than general-purpose LLMs. SLMs excel in areas where precision, speed, and compliance matter most, such as predictive operations, knowledge management, and targeted automation, giving you the tools to act decisively and achieve measurable outcomes.

Understanding the difference between LLMs and SLMs is now a strategic imperative. LLMs provide breadth and enterprise-wide insight, while SLMs deliver targeted, agile performance where it matters most. The right combination allows you to deploy AI intelligently, maximize ROI, and ensure every initiative aligns with your KPIs, making AI not just powerful but effective for your business.

This blog is designed to help you cut through the noise around LLMs and SLMs, understand their business impact, and gain the clarity to make faster, smarter decisions that turn AI into a measurable strategic advantage.

What are SLMs and LLMs

In the evolving landscape of artificial intelligence, small language models (SLMs) and large language models (LLMs) represent two pivotal categories that shape how enterprises harness AI to drive innovation, efficiency, and competitive advantage. Though they share a foundational architecture rooted in transformers, their design philosophies, operational roles, and business implications differ significantly.

Understanding these distinctions is essential for technology leaders seeking to build scalable, resilient, and context-aware AI systems tailored to their organization's unique demands.

Small language models (SLMs): Task-tuned, operationally efficient AI

Small language models (SLMs) are compact AI systems designed to deliver fast, domain-focused intelligence with operational efficiency. Unlike LLMs, SLMs are trained on curated, domain-specific datasets, making them highly accurate for specialized tasks such as document summarization, sentiment analysis, coding assistance, or internal knowledge retrieval.

With fewer parameters, SLMs require less computational power and are capable of on-device or edge deployment, making them ideal for low-latency, real-time operations. They enable enterprises to run AI safely in privacy-sensitive environments, scale cost-effectively across thousands of endpoints, and maintain offline-ready capabilities in environments with limited connectivity.

SLMs are particularly valuable for task-specific workflows, such as automating forms, validating structured inputs, or delivering virtual assistant functionality in enterprise systems. Their smaller memory footprint, lower energy consumption, and fast inference speed allow businesses to embed AI into daily operations without the complexity or cost of LLMs.

Large language models (LLMs): Generalized, scalable intelligence

Large language models (LLMs) are advanced AI systems designed to understand, generate, and reason with human language across multiple domains. Trained on vast and diverse datasets including text, code, research publications, and proprietary enterprise data, LLMs can handle complex tasks, such as content summarization, predictive analytics, knowledge extraction, and sentiment analysis.

With tens of billions to trillions of parameters and the ability to perform few-shot learning, LLMs provide deep contextual understanding and support long-context reasoning. They require significant compute resources, often using multiple parallel processing units like GPUs or TPUs for training and inference, and are usually cloud-hosted to ensure scalability and high concurrency.

Enterprises leverage LLMs for enterprise-wide intelligence: enabling cross-departmental knowledge retrieval, multi-turn digital assistants, advanced semantic search, and multilingual support. While they are resource-intensive, their broad training data, large parameter scale, and emergent capabilities allow organizations to generate high-quality, human-like language outputs and derive insights at scale, making them essential for strategic decision-making, innovation, and complex enterprise workflows.

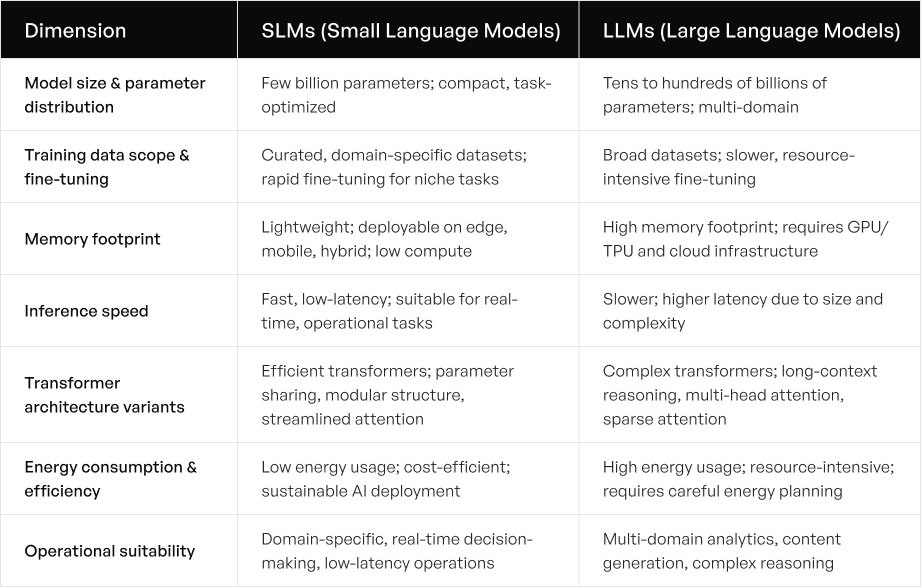

Architectural and design differences

Understanding the architectural and design differences between small language models (SLMs) and large language models (LLMs) is critical for enterprises seeking to deploy AI effectively. These distinctions determine model capabilities, computational requirements, and suitability for specific domain-specific tasks, helping organizations choose the right model for the right use case.

1. Model size and parameter distribution

Small language models (SLMs) typically contain a few billion parameters, making them compact and task-optimized. Their smaller size allows for fast inference, lower computational power requirements, and efficient operation in resource-constrained environments. SLMs excel in specific task domains like sentiment analysis, enterprise automation, and virtual assistants, where precision and operational efficiency are essential.

Large language models (LLMs), by contrast, feature tens to hundreds of billions of parameters, enabling them to generate human language, handle complex tasks, and emulate human intelligence across multiple domains. Their large model parameters support complex relationships, sophisticated data analysis, and deep contextual understanding, making them ideal for multi-domain applications.

2. Training data scope and fine-tuning

SLMs are trained on curated, domain-specific datasets, allowing rapid fine-tuning for specialized applications. This focused approach ensures high accuracy, reduces hallucinations, and supports domain-specific knowledge retention. SLMs are particularly useful in regulated industries like healthcare and finance, where proprietary data protection and data privacy are crucial.

LLMs are trained on broad training data, including text, code, and other large-scale sources. While this enables them to handle complex tasks across multiple domains, their resource-intensive training requires significant compute resources and multiple parallel processing units. Fine-tuning LLMs is slower and more expensive, but it enables general-purpose AI applications with vast datasets.

3. Memory footprint and computational requirements

SLMs have a lightweight memory footprint, allowing deployment on edge devices, mobile platforms, or hybrid infrastructures. Their fewer parameters and lower computational power requirements make them suitable for real-time AI applications without extensive infrastructure.

LLMs, due to their size, require GPU- or TPU-intensive environments, high memory, and often cloud-based infrastructure. They need significant computational power for both training and inference, making them ideal for enterprise-wide knowledge systems but less suited for low-latency, on-device tasks.
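To make the footprint gap concrete, here is a minimal back-of-the-envelope sketch. It counts model weights only (no activations or caches). The model sizes (3 billion and 70 billion parameters) and the function name `weight_memory_gb` are illustrative assumptions, not figures from any specific product.

```python
# Bytes needed to store one parameter at common numeric precisions.
BYTES_PER_PARAM = {"fp32": 4.0, "fp16": 2.0, "int8": 1.0, "int4": 0.5}


def weight_memory_gb(num_params: float, precision: str = "fp16") -> float:
    """Approximate memory for model weights only, in gigabytes (1 GB = 1e9 bytes)."""
    return num_params * BYTES_PER_PARAM[precision] / 1e9


if __name__ == "__main__":
    # Hypothetical model sizes, chosen only to illustrate the scale difference.
    for name, params in [("3B-parameter SLM", 3e9), ("70B-parameter LLM", 70e9)]:
        for precision in ("fp16", "int4"):
            gb = weight_memory_gb(params, precision)
            print(f"{name} @ {precision}: ~{gb:.1f} GB of weights")
```

Under these assumptions, the smaller model needs about 6 GB at 16-bit precision and fits on a single consumer-grade device, while the larger one needs about 140 GB and typically has to be split across several accelerators.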

4. Inference speed and latency

SLMs deliver fast, low-latency inference, critical for applications such as virtual assistants, real-time customer support, and on-device AI. Their compact architecture ensures quick model response while maintaining accuracy in domain-specific tasks.

LLMs provide broad generalization and deep contextual understanding, but their large size and complex model architecture introduce latency. While excellent for sophisticated content generation or probabilistic machine learning, they may be less suitable for time-sensitive, operational decision-making scenarios.
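One rough way to reason about this latency gap: when a model generates text one token at a time for a single user, each token usually requires reading all of the weights from memory, so memory bandwidth sets a floor on time per token. The sketch below applies that rule of thumb. The bandwidth figure and model sizes are hypothetical, and real systems deviate from this bound because of batching, caching, and multi-device communication.

```python
def max_tokens_per_second(weight_bytes: float, bandwidth_bytes_per_s: float) -> float:
    """Upper bound on single-stream decode speed if every token reads all weights once."""
    return bandwidth_bytes_per_s / weight_bytes


def min_ms_per_token(weight_bytes: float, bandwidth_bytes_per_s: float) -> float:
    """Lower bound on per-token latency in milliseconds under the same assumption."""
    return 1000.0 * weight_bytes / bandwidth_bytes_per_s


if __name__ == "__main__":
    bandwidth = 1e12  # hypothetical accelerator: 1 TB/s of memory bandwidth
    models = {
        "3B SLM, fp16 weights": 6e9,      # 3e9 params * 2 bytes
        "70B LLM, fp16 weights": 140e9,   # 70e9 params * 2 bytes
    }
    for name, weight_bytes in models.items():
        ms = min_ms_per_token(weight_bytes, bandwidth)
        tps = max_tokens_per_second(weight_bytes, bandwidth)
        print(f"{name}: at least {ms:.0f} ms per token (at most {tps:.0f} tokens/s)")
```

With these illustrative numbers the floor is about 6 ms per token for the small model and about 140 ms per token for the large one, which is why size alone shifts a model out of the real-time range.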

5. Transformer architecture variants

SLMs leverage efficient transformer designs with parameter sharing, modular structures, and streamlined attention mechanisms. These innovations reduce resource-intensive computation while maintaining accuracy on specific domains, supporting energy-efficient AI deployment.

LLMs use larger transformer variants with more layers and attention heads and, in some designs, sparse attention mechanisms that support long-context reasoning. These architectures support advanced model outputs across broad domains but require significant compute resources and high-end hardware.

6. Energy consumption and efficiency

SLMs are optimized for minimal energy usage, making them suitable for sustainable AI practices and deployment across resource-constrained environments. Their lower operational costs and efficient architecture enable enterprise-wide adoption without heavy infrastructure investment.

LLMs, in contrast, have a high energy footprint due to resource-intensive training and inference. While they deliver powerful AI capabilities, deploying LLMs requires substantial computational power, multiple GPUs, and careful energy management planning.

SLMs offer efficient, task-specific performance, real-time inference, and energy-efficient deployment, making them ideal for domain-specific and operationally constrained environments. LLMs deliver broad multi-domain capabilities, complex reasoning, and advanced data analysis, but require significant computational resources and cloud infrastructure.

By understanding these architectural and design differences, enterprises can strategically select the right AI model, balancing precision, speed, scalability, and efficiency for their unique operational requirements.

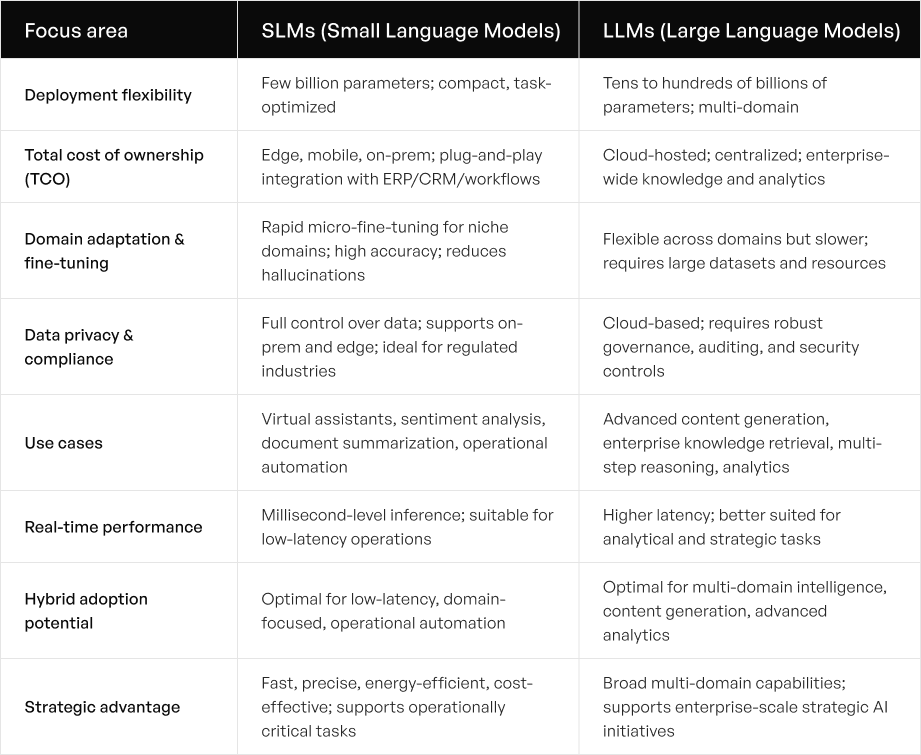

SLMs vs. LLMs: Key differences shaping enterprise adoption

As enterprises increasingly adopt AI, the choice between small language models (SLMs) and large language models (LLMs) is no longer just about size. It is a strategic decision that affects deployment, cost, security, and operational value. Understanding the unique strengths and limitations of each model type helps organizations make informed decisions that align with their business goals, domain-specific tasks, and infrastructure constraints.

Deployment flexibility

SLMs are designed for edge, mobile, and on-premises deployment, enabling low-latency, real-time performance. Their smaller model parameters and lighter memory footprint allow them to operate efficiently on consumer-grade hardware or resource-constrained environments, making them ideal for virtual assistants, real-time sentiment analysis, and operational automation.

LLMs, in contrast, are cloud-hosted and centralized, requiring high computational power and multiple parallel processing units. This makes them suited for enterprise-wide knowledge management, multi-domain content generation, and advanced analytics, where scale and broad training data support complex tasks and deep contextual understanding.

Total cost of ownership (TCO)

SLMs deliver a leaner cost structure, thanks to fewer parameters, smaller training datasets, and lower computational requirements. Enterprises can deploy SLMs widely without significant infrastructure investment, supporting real-time operations in privacy-sensitive domains such as healthcare and finance.

LLMs demand higher TCO, requiring extensive compute resources, large storage, and resource-intensive fine-tuning. While expensive, they provide emergent capabilities like generating human language, sophisticated data analysis, and handling complex relationships across multiple domains.
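A simple way to compare the two cost structures is to split each into a fixed monthly cost and a per-request cost, then find the request volume where they break even. The sketch below does that. Every number in it is a placeholder, and the `Deployment` class and `break_even_requests` function are hypothetical names introduced for illustration; substitute your own quotes and measurements.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class Deployment:
    name: str
    fixed_monthly_cost: float    # hardware, hosting, maintenance
    cost_per_1k_requests: float  # compute or API usage per 1,000 requests

    def monthly_cost(self, requests_per_month: float) -> float:
        """Total monthly cost at a given request volume."""
        return self.fixed_monthly_cost + self.cost_per_1k_requests * requests_per_month / 1000.0


def break_even_requests(a: Deployment, b: Deployment) -> Optional[float]:
    """Monthly volume at which a and b cost the same, or None if there is no positive one."""
    rate_gap = b.cost_per_1k_requests - a.cost_per_1k_requests
    if rate_gap == 0:
        return None
    volume = 1000.0 * (a.fixed_monthly_cost - b.fixed_monthly_cost) / rate_gap
    return volume if volume > 0 else None


if __name__ == "__main__":
    # Placeholder figures only; they are not benchmarks or vendor prices.
    slm = Deployment("self-hosted SLM", fixed_monthly_cost=2000.0, cost_per_1k_requests=0.05)
    llm = Deployment("cloud LLM API", fixed_monthly_cost=0.0, cost_per_1k_requests=2.00)
    volume = break_even_requests(slm, llm)
    if volume is not None:
        print(f"Costs are equal at about {volume:,.0f} requests per month")
    for monthly in (100_000, 5_000_000):
        print(monthly, round(slm.monthly_cost(monthly), 2), round(llm.monthly_cost(monthly), 2))
```

The design point is that a self-hosted small model tends to trade a higher fixed cost for a lower marginal cost, so which option is cheaper depends on volume rather than on model size alone.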

Fine-tuning and domain adaptation

SLMs excel at rapid fine-tuning using domain-specific datasets, making them highly effective for legal documents, clinical summaries, or enterprise knowledge retrieval. Their ability to adapt quickly to particular tasks ensures accuracy and reduces the risk of hallucinations that may occur in broader models.

LLMs can also be fine-tuned but require vast datasets, longer training times, and significant computational power. Their flexibility across domains allows enterprises to deploy LLMs for creative writing, in-depth content creation, and cross-domain knowledge tasks, albeit with a higher operational footprint.
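The difference in fine-tuning effort can be estimated with a common rule of thumb: full fine-tuning with the Adam optimizer in mixed precision keeps roughly 16 bytes of state per parameter (16-bit weights and gradients, plus 32-bit master weights and two optimizer moments). The sketch below applies that estimate. It excludes activation memory, and the model sizes are illustrative assumptions.

```python
# Approximate training state per parameter for mixed-precision Adam, in bytes.
FP16_WEIGHTS = 2
FP16_GRADIENTS = 2
FP32_MASTER_WEIGHTS = 4
ADAM_FIRST_MOMENT = 4
ADAM_SECOND_MOMENT = 4
BYTES_PER_PARAM_TRAINING = (
    FP16_WEIGHTS + FP16_GRADIENTS + FP32_MASTER_WEIGHTS + ADAM_FIRST_MOMENT + ADAM_SECOND_MOMENT
)  # 16 bytes


def full_finetune_memory_gb(num_params: float) -> float:
    """Rough memory for weights, gradients, and optimizer state (activations excluded)."""
    return num_params * BYTES_PER_PARAM_TRAINING / 1e9


if __name__ == "__main__":
    # Hypothetical sizes for illustration.
    for name, params in [("3B-parameter SLM", 3e9), ("70B-parameter LLM", 70e9)]:
        print(f"{name}: ~{full_finetune_memory_gb(params):.0f} GB of training state")
```

Under this estimate the small model needs about 48 GB of training state and the large one about 1,120 GB, which is one reason full fine-tuning of large models usually requires a multi-accelerator cluster.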

Data privacy, security, and compliance

SLMs provide full control over data, supporting on-premises deployment and trusted edge environments. This ensures compliance with regulatory mandates and data sovereignty requirements, critical for industries like finance, healthcare, and defense.

LLMs, typically accessed via cloud APIs, introduce considerations around auditability, governance, and data jurisdiction. Enterprises must implement robust security and monitoring to mitigate risks when sending sensitive proprietary data or user prompts to LLMs.

Use cases and operational value

SLMs are best suited for specific task domains, including:

- Virtual assistants for real-time support

- Document summarization for enterprise automation

- Sentiment analysis for customer and market insights

- Educational AI and AI tutoring

LLMs shine in multi-domain applications, enabling:

- Advanced content generation across industries

- Enterprise knowledge retrieval

- Sophisticated data analysis

- Complex, multi-step reasoning tasks

Real-time performance and responsiveness

SLMs offer millisecond-level inference, making them ideal for time-critical operations like factory automation, last-mile delivery, and clinical decision support. Their smaller model size and fewer parameters ensure quick model response even under high concurrency.

LLMs, although highly capable, may experience higher latency due to their large memory footprint, complex architecture, and resource-intensive inference. They are more suitable for analytical workflows, multi-step reasoning, and strategic decision-making rather than low-latency operations.

Hybrid adoption potential

Enterprises can maximize strategic AI value by combining SLMs and LLMs:

- SLMs for low-latency, domain-specific tasks, operational automation, and edge deployment

- LLMs for broad multi-domain intelligence, content generation, and advanced analytics

This hybrid strategy balances efficiency, scalability, and operational flexibility, enabling organizations to extract maximum value from AI while optimizing TCO, security, and domain relevance.
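The hybrid split above can be sketched as a small routing function that decides, per request, which model tier should handle it. This is a minimal illustration under stated assumptions: the task names, the word limit, the rule that sensitive data always stays on the small on-premises model, and the `Request` and `choose_model` names are all hypothetical choices, not a prescribed policy.

```python
from dataclasses import dataclass

# Hypothetical task labels that a small, domain-tuned model is assumed to handle well.
SLM_TASKS = {"sentiment", "summarize_document", "form_validation"}
# Hypothetical input-size limit for the small model, measured in words.
MAX_SLM_WORDS = 2000


@dataclass
class Request:
    task: str
    text: str
    contains_sensitive_data: bool = False


def choose_model(request: Request) -> str:
    """Return "slm" or "llm" for a request, using simple illustrative rules."""
    # Assumed policy: sensitive data never leaves the on-premises small model.
    if request.contains_sensitive_data:
        return "slm"
    # Narrow, short tasks go to the small model for low latency and cost.
    if request.task in SLM_TASKS and len(request.text.split()) <= MAX_SLM_WORDS:
        return "slm"
    # Everything else (open-ended, long, or cross-domain) goes to the large model.
    return "llm"


if __name__ == "__main__":
    examples = [
        Request("sentiment", "The delivery was late but support was helpful."),
        Request("cross_domain_analysis", "Compare churn drivers across regions."),
        Request("cross_domain_analysis", "Patient notes ...", contains_sensitive_data=True),
    ]
    for request in examples:
        print(request.task, "->", choose_model(request))
```

In practice the routing rules would come from your own latency targets, compliance requirements, and evaluation results rather than from fixed task lists.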

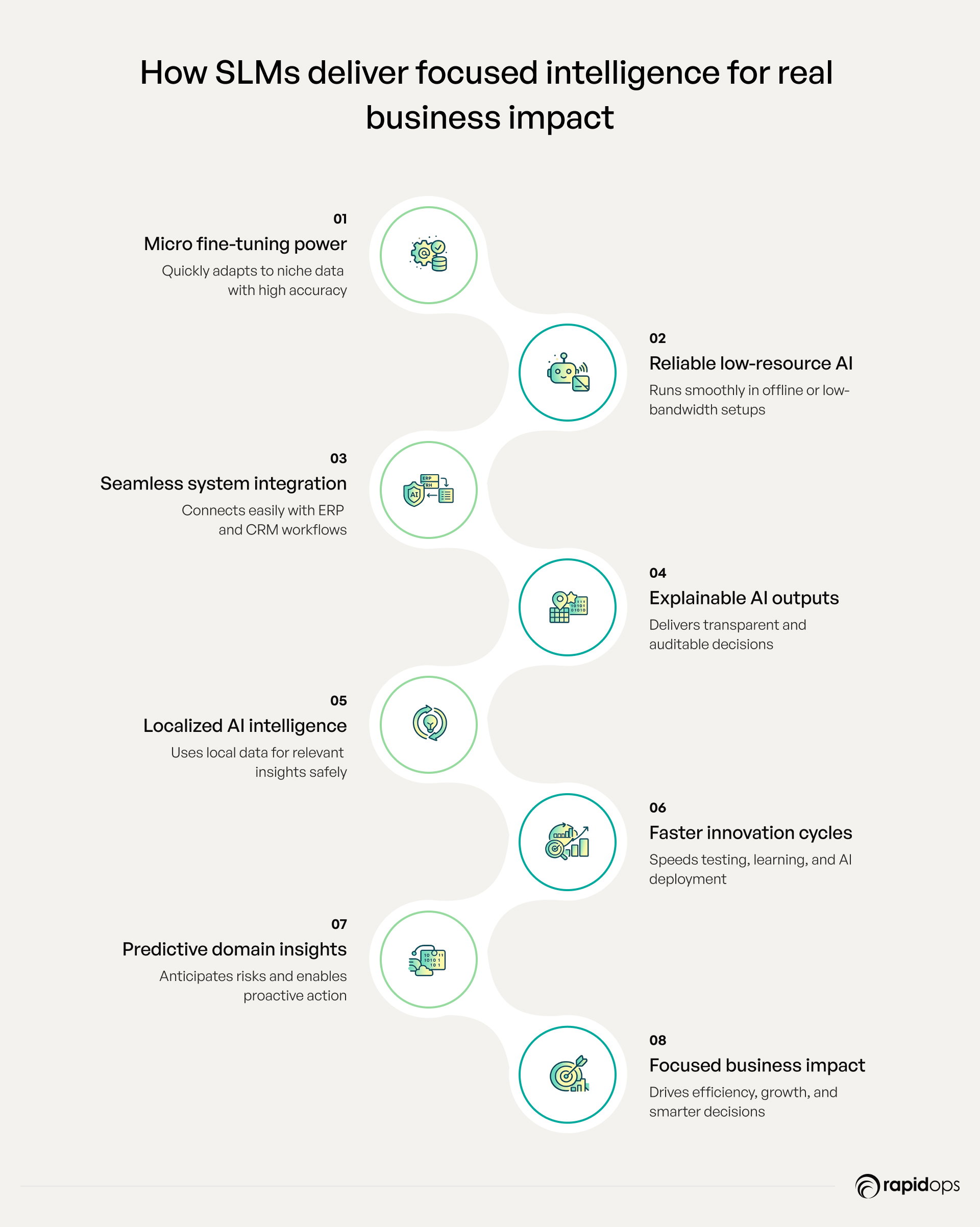

How SLMs drive fast, cost-efficient, and domain-focused AI for your business

Small Language Models (SLMs) are not just smaller alternatives to LLMs; they are strategic tools designed to create measurable business impact in ways many organizations overlook. By focusing on domain-specific intelligence, operational efficiency, and real-time adaptability, SLMs enable enterprises to extract actionable insights, optimize workflows, and innovate faster than competitors.

Rapid micro-fine-tuning for niche tasks

SLMs can be incrementally trained on proprietary or emerging datasets, enabling ultra-specific capabilities such as legal clause summarization, clinical protocol verification, or region-specific product recommendations. This micro-level adaptability ensures high accuracy and compliance without the cost or time overhead of retraining large models.

Operational continuity under constrained resources

SLMs perform reliably in offline, low-power, or limited-bandwidth environments, allowing businesses to maintain mission-critical operations across remote sites, field locations, or manufacturing floors. This capability ensures AI is always available where decisions matter most.

Plug-and-play enterprise integration

SLMs can integrate into existing ERP, CRM, and workflow systems without extensive re-engineering. By connecting AI directly to business processes, organizations can automate repetitive tasks, improve decision quality, and unlock hidden efficiencies across departments.

Deterministic and explainable outputs

Because SLMs are smaller and can run on infrastructure you control, with pinned model versions and fixed decoding settings, their outputs are easier to reproduce, trace, and audit, helping teams verify AI-driven decisions quickly. This is critical for regulated industries such as finance, healthcare, and defense, where accountability and governance are non-negotiable.
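One way to support traceability in practice is to log an audit record for every model call. The sketch below is a hypothetical example using only the Python standard library: it stores the model version, the decoding settings, and SHA-256 hashes of the prompt and output so a decision can later be matched to exactly what the model saw and produced. The field names and the `audit_record` function are assumptions for illustration.

```python
import hashlib
import json
from datetime import datetime, timezone
from typing import Any, Dict


def sha256_hex(text: str) -> str:
    """Hex digest of the UTF-8 encoded text."""
    return hashlib.sha256(text.encode("utf-8")).hexdigest()


def audit_record(model_version: str, prompt: str, output: str,
                 decoding: Dict[str, Any]) -> Dict[str, Any]:
    """Build a log entry that ties an output to its prompt, model version, and settings."""
    return {
        "timestamp_utc": datetime.now(timezone.utc).isoformat(),
        "model_version": model_version,
        "decoding": decoding,
        "prompt_sha256": sha256_hex(prompt),
        "output_sha256": sha256_hex(output),
    }


if __name__ == "__main__":
    record = audit_record(
        model_version="claims-slm-v1",  # hypothetical model identifier
        prompt="Summarize clause 4.2 of the supplier agreement.",
        output="Clause 4.2 limits liability to direct damages.",
        decoding={"temperature": 0.0, "max_new_tokens": 128},
    )
    print(json.dumps(record, indent=2))
```

Hashes are used here so the log can prove what was processed without storing sensitive text; whether to store the full prompt and output instead is a governance decision.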

Localized, hyper-personalized AI experiences

SLMs leverage local datasets to deliver highly personalized insights and recommendations tailored to specific teams, regions, or customers. This approach ensures relevance, drives engagement, and protects sensitive data by avoiding unnecessary exposure to central systems or cloud servers.

Rapid iteration and innovation cycles

SLMs enable teams to prototype, test, and deploy AI features quickly, reducing the time from idea to impact. This agility supports experimentation with new business models, process improvements, or customer experiences, giving organizations a first-mover advantage in competitive markets.

Predictive operational insights

By embedding domain-specific predictive models, SLMs help teams anticipate outcomes such as supply chain disruptions, customer churn, or maintenance requirements. This shifts operations from reactive to proactive, allowing data-driven strategic decisions in real time.

Unlike generic AI solutions, SLMs let you deploy intelligence where it matters most: fast, precise, and aligned with your operational realities. They provide high-impact, measurable outcomes, such as improving team productivity, enhancing customer experiences, accelerating decision-making, and reducing compliance risks. By leveraging SLMs strategically, you can achieve competitive advantage, operational resilience, and scalable AI adoption in 2026 and beyond.

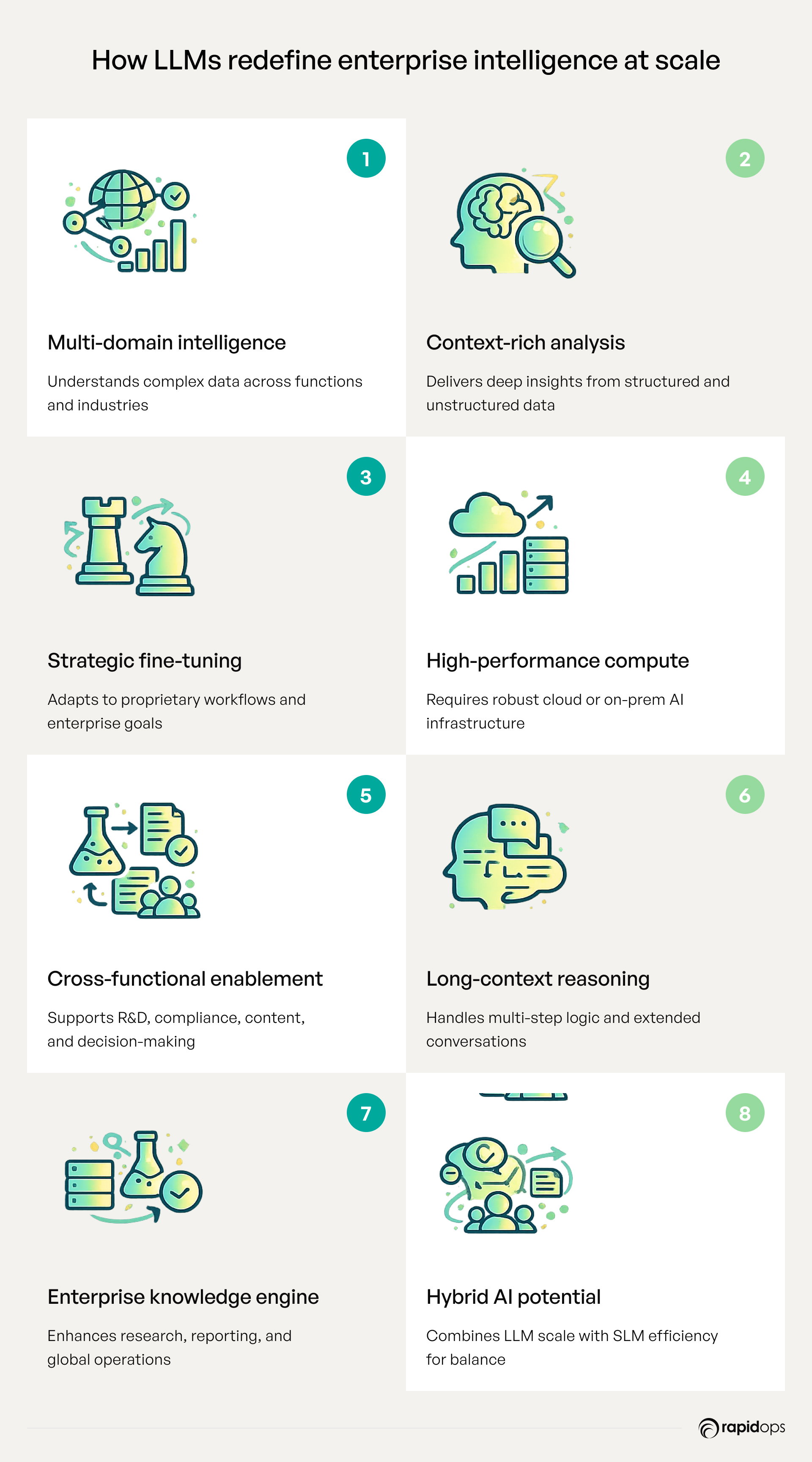

How LLMs unlock complex insights and enterprise-wide intelligence

Large language models (LLMs) are designed for enterprises seeking broad intelligence, multi-domain analysis, and strategic insights. With tens to hundreds of billions of parameters and training on vast, diverse datasets, LLMs offer generalized, scalable intelligence capable of performing complex tasks that span entire organizations.

Multi-domain comprehension and contextual understanding

LLMs provide deep contextual understanding across multiple domains, processing structured and unstructured data to deliver actionable insights. They support advanced analytics, market trend analysis, predictive modeling, and knowledge retrieval, enabling executives to make data-driven decisions at scale.

Advanced fine-tuning and enterprise adaptation

While general-purpose by design, LLMs can be fine-tuned on domain-specific datasets to enhance performance for proprietary workflows, such as legal document analysis, sales and marketing strategies, or enterprise content generation. This combination of broad knowledge and targeted adaptation ensures strategic alignment across business units.

Computational demands and infrastructure

LLMs require significant computing resources, often involving multiple parallel processing units like GPUs or TPUs. Their large model parameters and memory footprint necessitate cloud-hosted or high-performance on-premises deployment, providing the infrastructure for complex reasoning and multi-domain content generation.

Enterprise-wide operational value

LLMs excel in cross-functional workflows, supporting knowledge management, AI-driven decision support, automated content generation, and long-context reasoning. They are particularly suited for R&D, legal compliance, corporate intelligence, and global operations, where multi-turn interactions and broad language comprehension are critical.

Hybrid potential with SLMs

Enterprises can combine SLMs for domain-specific, low-latency tasks with LLMs for enterprise-scale intelligence, achieving a balance of efficiency, responsiveness, and strategic insights. This hybrid approach enables organizations to maximize AI value across both operational and analytical domains.

Understanding the limits of SLMs and LLMs

As enterprises integrate AI into strategic operations, understanding the limitations of Small Language Models (SLMs) and Large Language Models (LLMs) is critical. Recognizing these constraints allows organizations to deploy the right model for the right task, optimize costs, and ensure operational efficiency without compromising performance or compliance.

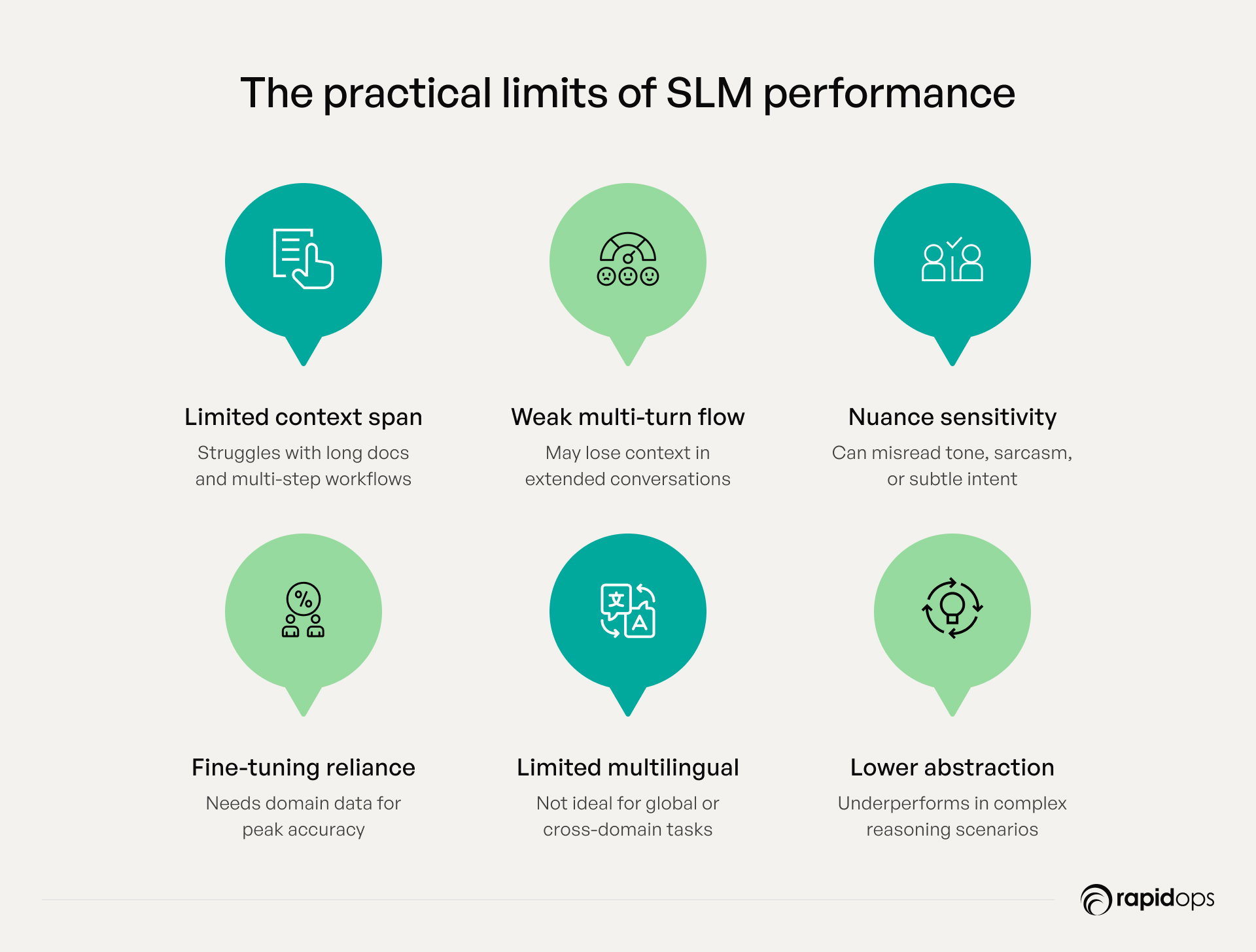

Limitations of small language models (SLMs)

SLMs are highly efficient, low-latency models designed for domain-specific tasks, but they come with inherent constraints that leaders must consider.

1. Narrower context windows

SLMs typically have a few billion parameters and shorter context windows, limiting their ability to reason across long documents, multi-step workflows, or chained user interactions. For example, an SLM can summarize individual reports but may struggle to synthesize insights across multiple departments.
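A common workaround for a limited context window is to split a long document into overlapping chunks, summarize each chunk, and then summarize the summaries. The sketch below illustrates the idea. It uses word counts as a stand-in for tokens, and the `summarize` argument is a placeholder for whatever model call you use; both are assumptions made for illustration.

```python
from typing import Callable, List


def chunk_words(text: str, max_words: int, overlap: int = 0) -> List[str]:
    """Split text into chunks of at most max_words words, sharing `overlap` words between neighbors."""
    if max_words <= 0:
        raise ValueError("max_words must be positive")
    if not 0 <= overlap < max_words:
        raise ValueError("overlap must be in [0, max_words)")
    words = text.split()
    step = max_words - overlap
    chunks: List[str] = []
    for start in range(0, len(words), step):
        chunks.append(" ".join(words[start:start + max_words]))
        if start + max_words >= len(words):
            break  # the last chunk already reaches the end of the text
    return chunks


def summarize_long(text: str, summarize: Callable[[str], str],
                   max_words: int = 512, overlap: int = 32) -> str:
    """Summarize each chunk, then summarize the combined partial summaries."""
    partials = [summarize(chunk) for chunk in chunk_words(text, max_words, overlap)]
    if not partials:
        return ""
    if len(partials) == 1:
        return partials[0]
    # Note: for very long inputs the combined partials may need chunking again.
    return summarize(" ".join(partials))


if __name__ == "__main__":
    # Stand-in "model" that keeps the first five words, purely for demonstration.
    def fake_summarize(chunk: str) -> str:
        return " ".join(chunk.split()[:5])

    document = " ".join(f"word{i}" for i in range(1200))
    print(len(chunk_words(document, max_words=512, overlap=32)), "chunks")
    print(summarize_long(document, fake_summarize))
```

This keeps each model call within the window, at the cost of losing some cross-chunk context, which is the trade-off the limitation above describes.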

2. Challenges with multi-turn interactions

While ideal for single-task automation, such as virtual assistants or sentiment analysis, SLMs may lose context in extended conversations, affecting coherence in customer support or multi-step query handling.

3. Sensitivity to ambiguity and nuance

SLMs excel in focused domains but can misinterpret sarcasm, idioms, or subtle intent variations. High-quality prompts and domain-specific training data are essential to maintain reliability in real-world scenarios.

4. Dependency on fine-tuning

These models require domain-specific datasets and careful fine-tuning for peak performance. Without it, accuracy and generalizability can drop, especially in edge-case workflows such as legal document summarization or healthcare diagnostics.

5. Limited multilingual and cross-domain capability

Optimized for targeted tasks, SLMs are less effective for translation, cross-domain insights, or multi-lingual customer engagement, making them unsuitable for global enterprise applications without additional customization.

6. Constraints in abstraction and dynamic reasoning

SLMs are task-focused and may underperform in abstract reasoning, predictive modeling, or evolving decision-making scenarios that require high-level generalization across business units.

Limitations of large language models (LLMs)

LLMs provide generalized, scalable intelligence across multiple domains, but understanding their operational constraints ensures practical deployment.

1. Higher latency and inference overhead

With tens to hundreds of billions of parameters, LLMs require GPU/TPU-intensive infrastructure, which can slow real-time responses in edge deployments or operational systems demanding immediate action.

2. Operational and infrastructure costs

Hosting, fine-tuning, and maintaining LLMs is resource-intensive. Enterprises must account for substantial cloud infrastructure, energy usage, and compute costs, making them best suited for high-impact, enterprise-wide applications.

3. Risks with sensitive data handling

Cloud-hosted LLMs raise considerations around data residency, privacy, and regulatory compliance, especially in healthcare, finance, and defense sectors.

4. Scalability bottlenecks

Uniform deployment across departments or locations can be complex and expensive. LLMs excel at knowledge retrieval, cross-domain analytics, and strategic insight, but may not suit all enterprise workflows equally.

5. Inefficiency in offline and edge environments

Due to their size and computing needs, LLMs are impractical for offline operations or environments with limited connectivity, such as field operations, last-mile logistics, or real-time industrial monitoring.

6. Explainability and controllability gaps

LLMs function largely as opaque systems, which can make auditing, enforcing constraints, and tracing model behavior challenging. This is an important consideration in regulated industries.

7. Knowledge staleness

Without continuous retraining or integration with real-time data, LLMs risk providing outdated information, which can impact fast-evolving domains like market trend analysis, R&D, or compliance monitoring.
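Integration with current data is often done by retrieving relevant, up-to-date documents at query time and placing them in the prompt. The sketch below shows the idea with a deliberately simple keyword-overlap retriever. The documents are made-up examples, the scoring method is a stand-in for a real search or embedding index, and the function names are hypothetical.

```python
import re
from typing import List, Set


def tokens(text: str) -> Set[str]:
    """Lowercased word tokens, ignoring punctuation."""
    return set(re.findall(r"\w+", text.lower()))


def overlap_score(query: str, document: str) -> int:
    """Number of distinct words shared by the query and the document."""
    return len(tokens(query) & tokens(document))


def retrieve(query: str, documents: List[str], k: int = 2) -> List[str]:
    """Return up to k documents with the highest non-zero overlap score."""
    ranked = sorted(documents, key=lambda doc: overlap_score(query, doc), reverse=True)
    return [doc for doc in ranked[:k] if overlap_score(query, doc) > 0]


def build_prompt(query: str, documents: List[str], k: int = 2) -> str:
    """Assemble a prompt that grounds the answer in retrieved context."""
    context = "\n".join(f"- {doc}" for doc in retrieve(query, documents, k))
    return (
        "Answer using only the context below.\n\n"
        f"Context:\n{context}\n\n"
        f"Question: {query}"
    )


if __name__ == "__main__":
    # Made-up internal documents, for illustration only.
    knowledge_base = [
        "Travel policy updated in March: economy class only.",
        "Cafeteria menu for this week.",
        "Expense policy: receipts required above 25 USD.",
    ]
    print(build_prompt("What is the travel policy?", knowledge_base))
```

Because the context is fetched at query time, updating the document store refreshes the model's answers without retraining.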

SLM vs LLM: Choosing the right model for the right task

In enterprise AI strategy, selecting between Small Language Models (SLMs) and Large Language Models (LLMs) is not just a technical choice; it’s a strategic business decision. Each model type delivers unique operational advantages, constraints, and enterprise value. Understanding these differences enables organizations to align AI capabilities with task complexity, deployment environment, cost efficiency, and domain-specific needs.

When to use SLMs

Small Language Models (SLMs) excel when speed, cost-efficiency, domain-specific accuracy, and operational agility are critical. Their compact architecture, fewer parameters, and ability to run on edge devices or on-premises systems make them the ideal choice for enterprises needing lightweight yet highly precise AI solutions.

Edge-centric operations and real-time performance

SLMs thrive in environments where low-latency inference is non-negotiable. From factory floors and logistics hubs to retail checkouts, SLMs deliver rapid responses without relying on cloud connectivity. Their smaller memory footprint and fewer parameters enable fast inference, allowing concurrent users to access AI insights instantly, even in resource-constrained environments.

Data privacy, compliance, and secure domain control

Enterprises operating in regulated industries such as healthcare, legal, and finance benefit from SLMs’ ability to process sensitive data entirely on-device or on-premises. This minimizes data movement, ensures privacy and residency compliance, and supports auditability. Organizations can maintain strict governance while leveraging domain-specific datasets to ensure outputs remain accurate and relevant.

Cost efficiency and rapid fine-tuning

SLMs are economical to train and deploy due to lower computational needs, fewer model parameters, and smaller datasets. Fine-tuning SLMs for specific workflows or tasks, such as sentiment analysis, document summarization, or automated form processing, is faster and less resource-intensive than fine-tuning LLMs. This allows enterprises to quickly adapt AI for domain-specific operational requirements without incurring high infrastructure costs.

Offline readiness and resource-constrained environments

In remote locations or environments with intermittent connectivity, SLMs ensure continuous AI capabilities. They can operate on consumer-grade hardware, edge servers, or, in heavily compressed form, embedded devices, enabling organizations to maintain productivity and intelligence without cloud dependency.

Targeted domain-specific expertise

SLMs excel in narrow, domain-focused applications. By training on proprietary or curated datasets, they can match or even surpass LLMs in specialized tasks like clinical diagnosis, industry-specific analytics, and regulatory reporting. Their ability to deliver precise results in specific domains makes them indispensable for operational efficiency and high-value task execution.

When to use LLMs

Large Language Models (LLMs) are best suited for scenarios requiring multi-domain intelligence, deep contextual understanding, and enterprise-scale reasoning. With tens of billions to trillions of parameters and the ability to process vast, diverse datasets, LLMs provide enterprises with generalized, scalable intelligence across departments and functions.

Complex reasoning and multi-turn tasks

LLMs excel at handling long-context conversations, chained queries, and open-ended reasoning. They can synthesize incomplete or ambiguous inputs into actionable insights, making them ideal for strategic decision support, AI copilots, and cross-functional workflows. Their capability to generate human language and interpret complex relationships enables enterprises to tackle tasks that demand nuanced understanding and creative problem-solving.

Enterprise-wide intelligence and cross-domain insights

Trained on broad and proprietary datasets, LLMs break down silos by providing cohesive knowledge across HR, finance, operations, marketing, and R&D. They support advanced semantic search, retrieval-augmented generation, and knowledge extraction, enabling enterprises to make informed decisions faster and with higher accuracy.

Multilingual and global capabilities

LLMs empower global enterprises by handling multiple languages, translations, and cultural nuances, enabling seamless collaboration across geographies. They can localize content, maintain consistent brand tone, and support global customer engagement.

Advanced content generation and analytics

LLMs generate dynamic content across reports, marketing materials, technical documentation, and more. They support probabilistic machine learning, market trend analysis, and sophisticated data-driven insights, offering enterprises high-value outputs that inform strategic initiatives and innovation programs.

Infrastructure and computational considerations

Deploying LLMs requires significant computational resources, including GPUs or TPUs, and robust cloud or on-prem infrastructure. While resource-intensive, their scalability and adaptability allow enterprises to manage large-scale AI deployments and multi-domain applications, making them a cornerstone for enterprise intelligence and innovation.

Hybrid orchestration for maximum impact

The most effective enterprise AI strategies combine SLMs and LLMs. SLMs handle low-latency, domain-specific tasks, while LLMs focus on complex reasoning, multi-domain insights, and enterprise-scale intelligence. This hybrid approach ensures cost efficiency, operational precision, and maximum AI value across the organization.

Making the right model choice for operational success

Now that you understand the fundamental differences between Small Language Models and Large Language Models, the real decision isn’t about size; it’s about strategic fit. The best model for your enterprise is the one that aligns with your unique goals, data infrastructure, and operational realities.

LLMs excel at deep reasoning, generating broad content, and handling complex, multi-domain tasks. Meanwhile, SLMs deliver speed, efficiency, enhanced privacy, and on-device performance, making them ideal for focused, sensitive, or latency-critical applications. Success comes from deploying the right model, at the right place, for the right purpose.

At Rapidops, we help you pinpoint where AI delivers the greatest business advantage before writing a single line of code. We assess your AI maturity, identify whether an SLM, LLM, or hybrid approach best suits your business, and then build, fine-tune, and deploy solutions designed to create a measurable impact. Whether your priority is streamlining operations, empowering smarter decisions, or transforming customer experiences, our team is here to ensure your AI investments deliver real, scalable value.

Not sure which language model is right for you?

Book a free strategy session with our experts. We’ll understand your goals, assess your business processes, recommend the best-fit model, and help you turn the plan into a working solution that delivers real impact.

Frequently Asked Questions

Why are small language models becoming important in 2026?

Small language models (SLMs) are gaining momentum in 2026 due to their high utility and lower computational overhead. As businesses prioritize AI cost efficiency, control, and compliance, SLMs enable on-device intelligence, faster inference, and easier fine-tuning for domain-specific tasks, especially where large-scale cloud inference isn’t viable or necessary.

Do SLMs use transformer architecture like LLMs?

Yes, most small language models are based on the same transformer architecture as LLMs. The key difference lies in the number of parameters, the number of layers, and the computational requirements. SLMs retain core architectural strengths such as attention mechanisms and contextual embeddings while operating within a lighter, faster footprint.

Are small language models easier to train and deploy than LLMs?

Absolutely. SLMs are easier to train, fine-tune, and deploy due to their smaller size and lower infrastructure demands. Enterprises can host them on local servers, edge devices, or secure environments, enabling faster iteration cycles, cost-effective experimentation, and better control over model behavior and data governance.

How do SLMs support multilingual or code-mixed environments?

Modern SLMs can be trained or fine-tuned to handle multilingual or code-mixed input effectively. While they may not match LLMs in language breadth, focused fine-tuning on regional or industry-specific datasets allows SLMs to excel in specialized multilingual contexts like localized customer support or field operations across diverse regions.

What is the future of small language models in enterprise AI?

SLMs will play a critical role in the enterprise AI stack, especially in edge AI, embedded systems, and private environments. Their ability to deliver fast, task-specific intelligence at lower cost and risk makes them ideal for decentralized workflows, personalized interfaces, and domain-aware co-pilots, complementing LLMs rather than replacing them.

Can SLMs be integrated into existing enterprise software stacks?

Yes. SLMs can be seamlessly embedded into CRMs, ERPs, and analytics platforms using lightweight APIs or containerized services. This allows enterprises to enhance internal tools with context-aware automation, like suggesting product insights in sales tools or extracting insights from structured reports in finance systems without relying on external cloud LLMs.

Can I use an SLM for real-time decision-making at the edge?

Yes, SLMs are ideal for edge environments where real-time processing, low latency, and data privacy are critical. For instance, in manufacturing, logistics, or IoT setups, SLMs can enable intelligent decision-making directly on devices without sending data to the cloud, ensuring speed, resilience, and compliance with regulatory constraints.

Rahul Chaudhary

Content Writer

With 5 years of experience in AI, software, and digital transformation, I’m passionate about making complex concepts easy to understand and apply. I create content that speaks to business leaders, offering practical, data-driven solutions that help you tackle real challenges and make informed decisions that drive growth.

