Generative AI has transformed how businesses operate, opening up new capabilities across industries. From enhancing customer experience to streamlining operations, AI technologies such as large language models (LLMs) are redefining the competitive landscape.

But with great power comes great responsibility. The increasing reliance on extensive data, which often includes sensitive personal information, has raised numerous concerns about data privacy and the potential misuse of AI.

In this comprehensive guide, we’ll delve deep into the world of AI data privacy issues, aiming to arm businesses with the knowledge to navigate this complex domain. We start by exploring the relationship between AI and data privacy, shedding light on why and how personal data protection is of paramount importance in the context of AI applications.

Next, we examine the various AI and privacy laws implemented by jurisdictions worldwide, which lay the groundwork for protecting individuals' privacy rights and promoting transparency in the use of AI. We’ll also discuss the principles of responsible AI and how adhering to these guidelines ensures the ethical and respectful use of AI technologies.

Understanding AI data privacy challenges

Like any technology, generative AI can be misused. Because it leans heavily on data, it can be used to fabricate fake profiles or manipulate images, for example.

In the era of AI, ignorance is not an option. For businesses to leverage AI's full potential without infringing on privacy rights, they must recognize and understand the associated risks.

As a business owner or professional, it's crucial that you value privacy. Note that most generative AI systems, ChatGPT included, cannot provide a foolproof guarantee of data privacy.

This matters even more if your business is contractually obliged to safeguard privacy or maintain confidentiality for your clients. When you feed client, customer, or partner information into a chatbot, you may not be able to predict how the system will use that information.
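One practical precaution before feeding client information into a chatbot is to strip obvious identifiers from the text first. The following is a minimal Python sketch, not a complete safeguard. The function name `redact_pii` and both regular expressions are assumptions made for illustration, and simple patterns like these will miss names, postal addresses, and many other identifiers.

```python
import re

# Illustrative patterns only (assumptions, not an exhaustive PII detector).
EMAIL_RE = re.compile(r"[A-Za-z0-9._%+-]+@[A-Za-z0-9.-]+\.[A-Za-z]{2,}")
PHONE_RE = re.compile(r"\+?\d[\d\s().-]{7,}\d")


def redact_pii(text: str) -> str:
    """Replace email addresses and phone-like numbers with placeholders."""
    # Emails go first so digits inside an address are not mistaken for a phone number.
    text = EMAIL_RE.sub("[EMAIL]", text)
    text = PHONE_RE.sub("[PHONE]", text)
    return text


prompt = "Email jane.doe@example.com or call +1 415-555-0100 today."
print(redact_pii(prompt))  # prints: Email [EMAIL] or call [PHONE] today.
```

Redacting on your side, before the text leaves your systems, means the safeguard does not depend on how the AI provider handles the data.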

What is AI (artificial intelligence) and its importance for businesses?

In the business world, "AI" is a wide umbrella term that covers a network of interconnected techniques and technologies that are key to your organization's success. These technologies range from machine learning and predictive analytics to natural language processing and robotics.

By embracing these technological leaps, you, as a business owner, can unlock valuable insights, improve your decision-making processes, enhance customer experiences, and streamline your operations.

To effectively leverage these powerful tools and secure a competitive edge in today's rapidly evolving digital landscape, you must thoroughly understand the scope of AI and its many components. Hence, your journey into the realm of AI isn't just advisable; it's essential.

Artificial intelligence (AI) and privacy laws

Artificial Intelligence (AI) systems, with their steadily growing sophistication, are amassing and processing vast volumes of personal data. This can raise troubling privacy concerns for you as an individual, as you may remain in the dark about how your data is used or who can access it.

In response to these burgeoning concerns, governments across the globe have implemented laws that govern AI's use and the collection and processing of personal data. Although these laws vary from one country to the next, they all converge on several shared objectives:

- To protect individuals' privacy rights.

- To promote transparency and accountability in the use of AI.

- To foster innovation in AI technologies.

In recent years, a significant focus has been on establishing comprehensive AI governance frameworks prioritizing trustworthiness.

Public organizations and industry alliances have created these guidelines, emphasizing shared principles pivotal for responsibly implementing AI within business operations.

Here is a brief overview of some of the key AI and privacy laws in place in different jurisdictions:

1. European Union (E.U.)

The General Data Protection Regulation (GDPR) is one of the world's most comprehensive data protection laws, and it governs AI systems whenever they process personal data. It applies to all organizations that process the personal data of individuals located in the EU, regardless of where the organization is located.

The GDPR gives individuals strong rights over their data, including the rights to access, correct, delete, and port it. It also requires organizations to be transparent about how they collect and use personal data and to take steps to protect the privacy of that data.

2. United States (U.S.)

There is no comprehensive federal AI or privacy law in the US, but several state laws address different aspects of AI and privacy.

For example, the California Consumer Privacy Act (CCPA) gives consumers the right to know what personal data is being collected about them, how it is being used, and who it is being shared with. The CCPA also allows consumers to request that their data be deleted.

3. Brazil

Brazil's Lei Geral de Proteção de Dados (LGPD) is a comprehensive data protection law that took effect in September 2020. The LGPD gives individuals strong rights over their data and requires organizations to be transparent about collecting and using personal data. The LGPD also prohibits discrimination based on personal data.

4. South Africa

South Africa's Protection of Personal Information Act (POPIA) is a comprehensive data protection law that became fully enforceable in July 2021. The POPIA gives individuals strong rights over their data and requires organizations to be transparent about collecting and using personal data. The POPIA also prohibits discrimination based on personal data.

These are just a few examples of the AI and privacy laws that are in place around the world. As AI technology develops, more laws will likely be enacted to address the privacy challenges AI poses.
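Several of the rights these laws share, such as access, correction, deletion, and portability, map onto a small set of operations a business needs to be able to perform on request. The sketch below illustrates them in Python under stated assumptions: the in-memory `USER_DATA` store and all three function names are hypothetical, and a real system would also need identity verification, backups, and downstream processors to be covered.

```python
import json

# Hypothetical in-memory store; a real system would use a database.
USER_DATA = {
    "u1": {"name": "Jane Doe", "email": "jane.doe@example.com"},
}


def export_user_data(user_id: str) -> str:
    """Access and portability: return the user's data as machine-readable JSON."""
    record = USER_DATA.get(user_id)
    if record is None:
        raise KeyError(f"no data held for user {user_id!r}")
    return json.dumps(record, sort_keys=True)


def correct_user_data(user_id: str, field_name: str, value: str) -> None:
    """Correction: update a single field of the stored record."""
    USER_DATA[user_id][field_name] = value


def delete_user_data(user_id: str) -> bool:
    """Deletion: remove the record; returns True if something was deleted."""
    return USER_DATA.pop(user_id, None) is not None
```

Keeping these operations behind a few well-defined functions makes it easier to show a regulator or client how a request was handled.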

Key areas covered by these AI privacy laws

The emergence of AI technology has necessitated the development of privacy laws to protect individuals' rights and ensure the responsible use of personal data.

These laws address several key areas to safeguard privacy, promote transparency, and uphold ethical standards. The following areas are commonly covered by AI privacy laws:

1. Privacy and data governance

Organizations must establish clear policies and procedures for collecting, using, and storing personal data. They must prioritize privacy by implementing encryption and anonymization techniques to protect sensitive information.

2. Accountability and auditability

Organizations must be able to demonstrate accountability for their data practices. They should maintain records of how personal data is collected, used, and stored and have processes to conduct regular audits to ensure compliance with privacy laws and regulations.

3. Robustness and security

To safeguard personal data, organizations must employ robust security measures. This includes protecting data from unauthorized access, use, or disclosure. Regularly testing and updating security systems is essential to keep pace with evolving threats and maintain data integrity.

4. Transparency and explainability

Organizations are expected to be transparent about their data practices. This entails providing individuals with clear information on how their data is collected, used, and stored. Additionally, organizations must be able to explain their AI systems' functionality and decision-making processes.

5. Fairness and non-discrimination

AI systems should be designed and implemented to prevent discrimination against individuals based on protected characteristics, such as race, gender, or religion. Organizations must ensure their AI systems do not perpetuate biases or result in unfair treatment.

6. Human oversight

Maintaining human oversight over AI systems is crucial for responsible and ethical use. Organizations should have mechanisms to ensure that humans are involved in the decision-making process, overseeing the operation of AI systems and intervening when necessary to prevent harmful or unethical outcomes.

7. Promotion of human values

AI should be used in ways that align with human values, such as fairness, equality, and respect for privacy. Organizations are encouraged to prioritize these values in developing, deploying, and utilizing AI systems, ensuring they contribute positively to society.

By addressing these key areas, AI privacy laws aim to create a framework that protects individuals' privacy rights, promotes responsible data practices, and fosters trust between organizations and the public.

Compliance with these laws ensures that AI technologies are used ethically and in a manner that respects individuals' fundamental rights and values.
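The record-keeping expected under accountability and auditability can start very simply: every time personal data is used, write down who used it, what they did, and why. The Python sketch below is one possible shape for such a record. The function name `record_data_use` and the field names are assumptions for illustration, and a production log would be written to durable, tamper-resistant storage rather than a list in memory.

```python
from datetime import datetime, timezone


def record_data_use(log: list, actor: str, action: str,
                    data_category: str, purpose: str) -> dict:
    """Append one audit entry describing a single use of personal data."""
    entry = {
        "timestamp": datetime.now(timezone.utc).isoformat(),  # UTC, ISO 8601
        "actor": actor,                  # who or what touched the data
        "action": action,                # e.g. "read", "update", "delete"
        "data_category": data_category,  # e.g. "contact_details"
        "purpose": purpose,              # why the data was used
    }
    log.append(entry)
    return entry


audit_log = []
record_data_use(audit_log, "support-bot", "read",
                "contact_details", "answer support ticket")
```

Recording the purpose alongside the action is what lets a later audit check each use against the organization's stated privacy policy.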

Role of responsible AI amidst the data privacy chaos

Industry players have also taken proactive steps towards responsible AI adoption through self-regulatory initiatives.

Collaborations between businesses, academia, and non-profit organizations, such as the Partnership on AI and the Global Partnership on Artificial Intelligence (GPAI), aim to drive ethical AI practices forward. Standardization bodies like ISO/IEC, IEEE, and NIST have also provided valuable guidance in this domain.

While existing governance initiatives are primarily in the form of non-binding declarations, it's important to note that various privacy laws already regulate the responsible use of AI systems to a significant extent. Privacy regulators have played a prominent role in shaping AI governance efforts.

For example, Singapore's Personal Data Protection Commission released the Model AI Governance Framework, the UK Information Commissioner's Office has worked extensively on developing an AI Auditing Framework, and the Office of the Privacy Commissioner for Personal Data of Hong Kong has issued Guidance on the Ethical Development and Use of AI.

Notable examples of responsible AI frameworks include:

- UNESCO's Recommendation on the Ethics of AI

- China's ethical guidelines for AI usage

- The Council of Europe's report "Towards Regulation of AI Systems"

- The OECD AI Principles

- The Ethics Guidelines for Trustworthy AI by the European Commission's High-Level Expert Group on AI

Principles of responsible AI

The responsible and ethical use of AI is a guiding principle in a rapidly evolving, tech-centric world. As you strive to create AI systems in your business that honor user privacy, engender trust, and uphold ethical standards, the following principles come to the forefront:

1. Transparency and explainability

Transparency is key to building trust in AI systems. By providing clear explanations of AI algorithms and decision-making processes, businesses can empower individuals to understand how their data is utilized.

Transparent AI promotes accountability, enabling users to assess the fairness and potential biases within AI systems.

2. Data minimization and anonymization

Adopting practices that minimize data collection is crucial to mitigate privacy risks and safeguard sensitive information.

Businesses can reduce the chances of data breaches and unauthorized identification by only collecting necessary data and employing anonymization techniques. This protects individuals' privacy while allowing AI to operate effectively.

3. Consent and user control

Respecting user autonomy and privacy rights is paramount in responsible AI practices. Individuals should have control over their data and be given clear consent mechanisms to make informed decisions about its usage.

Empowering users to choose how their data is collected, processed, and shared enhances trust and ensures ethical data handling.

4. Ethical data governance

Comprehensive data governance frameworks play a vital role in responsible AI implementation. These frameworks prioritize privacy and ethical considerations throughout the AI lifecycle, from data acquisition to model training and deployment.

By establishing robust governance mechanisms, businesses can ensure that AI systems are designed and operated in a manner that respects fundamental rights and values.

As businesses adopt AI technologies, it is crucial to familiarize themselves with these governance developments and align their AI strategies with the principles outlined in these frameworks. Prioritizing responsible AI practices ensures compliance with privacy regulations and builds trust among customers, stakeholders, and society as a whole.
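To make the data minimization principle concrete, the Python sketch below keeps only the fields an AI feature needs and replaces the user identifier with a keyed hash. The allow-list `ALLOWED_FIELDS` and the function name `minimize_record` are assumptions for illustration. Note that keyed hashing is pseudonymization rather than full anonymization: anyone holding the secret key can link records back to a person, so the key must be protected accordingly.

```python
import hashlib
import hmac

# Hypothetical allow-list: the only fields this AI feature actually needs.
ALLOWED_FIELDS = {"user_id", "country", "plan"}


def minimize_record(record: dict, secret_key: bytes) -> dict:
    """Drop fields that are not needed and pseudonymize the user identifier."""
    kept = {key: value for key, value in record.items() if key in ALLOWED_FIELDS}
    if "user_id" in kept:
        # HMAC-SHA256 gives a stable pseudonym that cannot be recomputed without the key.
        kept["user_id"] = hmac.new(
            secret_key, str(kept["user_id"]).encode("utf-8"), hashlib.sha256
        ).hexdigest()
    return kept


raw = {"user_id": 42, "email": "a@b.com", "country": "DE", "plan": "pro"}
print(minimize_record(raw, b"demo-key"))  # the email field is dropped
```

An allow-list is used instead of a block-list so that any new field added upstream is excluded by default until someone decides it is needed.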

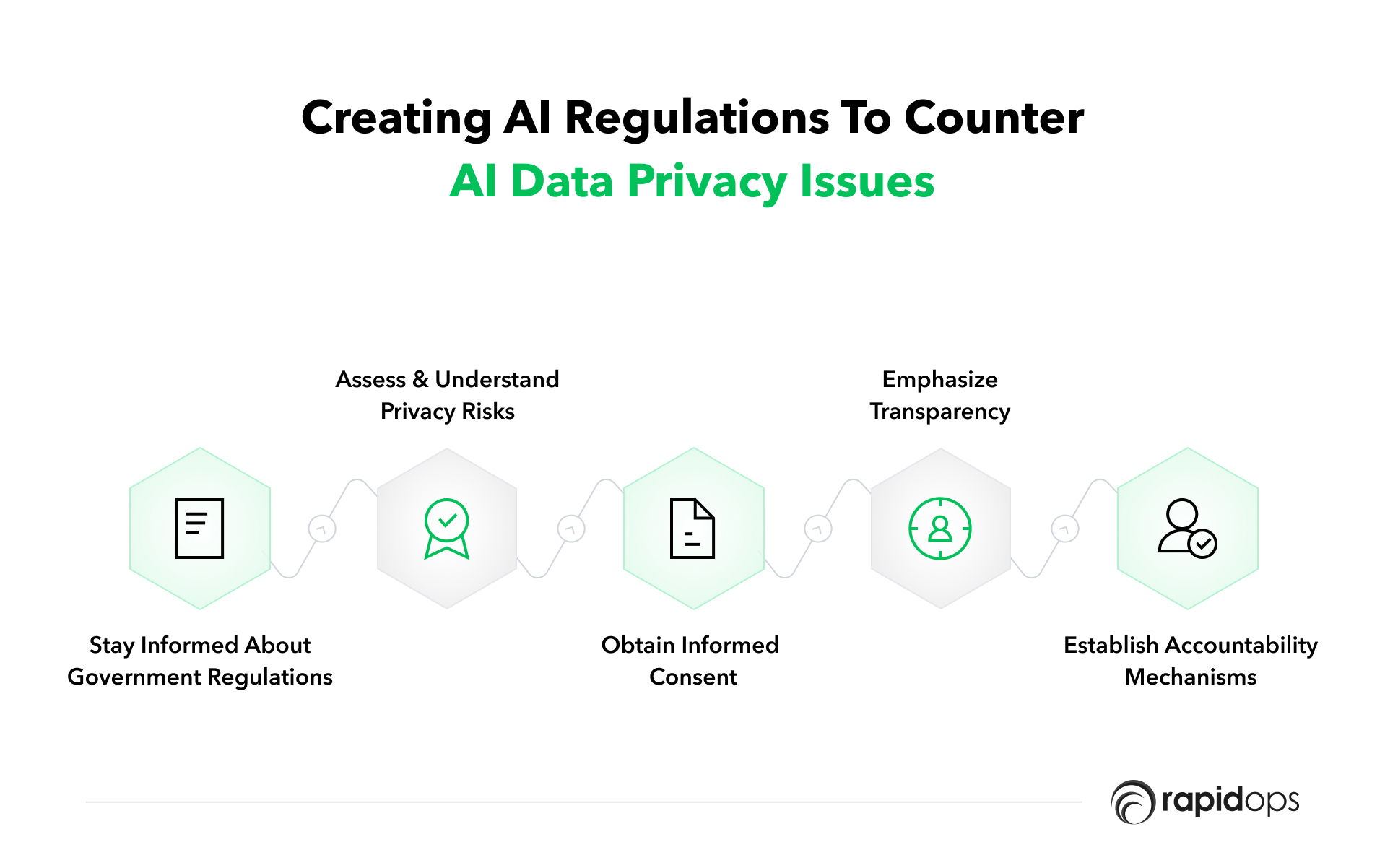

Dealing with the AI data privacy issues with AI regulations

When it comes to AI data privacy, businesses must navigate the landscape of regulations and best practices to ensure the responsible and ethical use of AI technology. Here are some key considerations for businesses dealing with AI-related data privacy issues:

1. Stay informed about government regulations

As AI technology evolves, governments will likely introduce new regulations to govern its use. Businesses must stay updated on these regulatory changes and adapt their practices accordingly.

By staying informed, businesses can ensure compliance and mitigate potential legal risks.

2. Assess and understand privacy risks

AI systems can introduce privacy risks, including bias, discrimination, and data breaches. Businesses need to conduct thorough assessments of these risks and implement appropriate safeguards.

By understanding the potential risks, businesses can proactively address them and protect individuals' privacy rights.

3. Obtain informed consent

When consent is required, businesses should seek explicit and informed consent from individuals before using their data in AI systems.

Consent should be specific to the intended use of data, ensuring individuals understand how their data will be utilized. By obtaining proper consent, businesses establish a foundation of trust and respect for individuals' privacy preferences.

4. Emphasize transparency

Transparency is vital in maintaining trust between businesses and individuals. Companies should adopt practices that promote transparency in how they employ AI systems and handle personal data.

Clear and concise explanations should be provided to individuals, outlining the purposes for which their data is collected, used, and shared. By being transparent, businesses foster an environment of openness and accountability.

5. Establish accountability mechanisms

Businesses should implement robust accountability processes to ensure the responsible and ethical use of AI systems. This includes monitoring and auditing AI systems to verify compliance with privacy policies and regulations.

By having clear processes in place, businesses can proactively address any privacy concerns that may arise and take corrective actions to protect individuals' data.
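The point that consent should be specific to the intended use of data can be enforced in code by checking consent per purpose rather than per user. The Python sketch below shows one way to do that. The `ConsentRecord` class, the `has_consent` function, and the purpose names are all hypothetical, and a real system would also store when and how each consent was given and withdrawn.

```python
from dataclasses import dataclass, field
from typing import Dict, Set


@dataclass
class ConsentRecord:
    """The purposes a single user has explicitly agreed to."""
    user_id: str
    purposes: Set[str] = field(default_factory=set)


def has_consent(records: Dict[str, ConsentRecord], user_id: str, purpose: str) -> bool:
    """Return True only if this user consented to this specific purpose."""
    record = records.get(user_id)
    return record is not None and purpose in record.purposes


records = {"u1": ConsentRecord("u1", {"support_chat"})}

if has_consent(records, "u1", "model_training"):
    print("OK to use this data for model training")
else:
    print("No consent for model training; skip this record")
```

Because an unknown user or an unlisted purpose both return `False`, the default behavior is to not process the data.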

Partner with Rapidops for ethical and profitable AI implementation

In a digital age where data has become the lifeblood of progress, the potential of AI is clearer than ever. But it's not just about leveraging these tools; it's about doing so in a manner that respects individual privacy and upholds transparency. This has become the new frontier in business: a frontier where success is about striking the right balance between technological advancement and ethical considerations.

We step deeper into uncharted territories with every new application and platform. It's not always easy for businesses to navigate these terrains on their own. That's where Rapidops comes in.

We firmly believe in creating a balance between ethics and profitability - a world where regulations are respected, and AI is used for the common good. We are committed to assisting businesses in implementing AI ethically, safeguarding individual privacy, and promoting societal and business betterment.

Do you have an AI concept that needs ethical, secure, and profitable execution? Turn to Rapidops. Connect with our team of experts today for a discovery call and kick-start your AI project on the right footing. At Rapidops, we don't just build AI solutions; we build trust.

Frequently asked questions (FAQs)

The following section strives to answer some of the most frequently asked questions on AI data privacy. From addressing the steps to protect data privacy to the prominent issues associated with AI, we aim to clarify your concerns and deliver a thorough understanding of the topic.

1. How to protect data privacy in AI?

2. What are the data privacy issues with AI?

3. How can data privacy issues be reduced?

4. How can we reduce the risk of AI?

5. Why is data privacy important for AI?

6. What are the AI risk issues?

7. What is the most important problem for AI safety?

8. How do you handle data privacy?

Saptarshi Das

Content Editor

9+ years of expertise in content marketing, SEO, and SERP research. Creates informative, engaging content to achieve marketing goals. Empathetic approach and deep understanding of target audience needs. Expert in SEO optimization for maximum visibility. Your ideal content marketing strategist.
