Stable Diffusion from Stability AI: Open-Source Text-to-Image Generation

Stability AI has released Stable Diffusion, an open-source image generator that turns text prompts into images. Its comparatively lightweight architecture lets it run on consumer hardware while still delivering good speed and image quality. This article covers how the model works, what it can do, where it falls short, and where it can be applied.

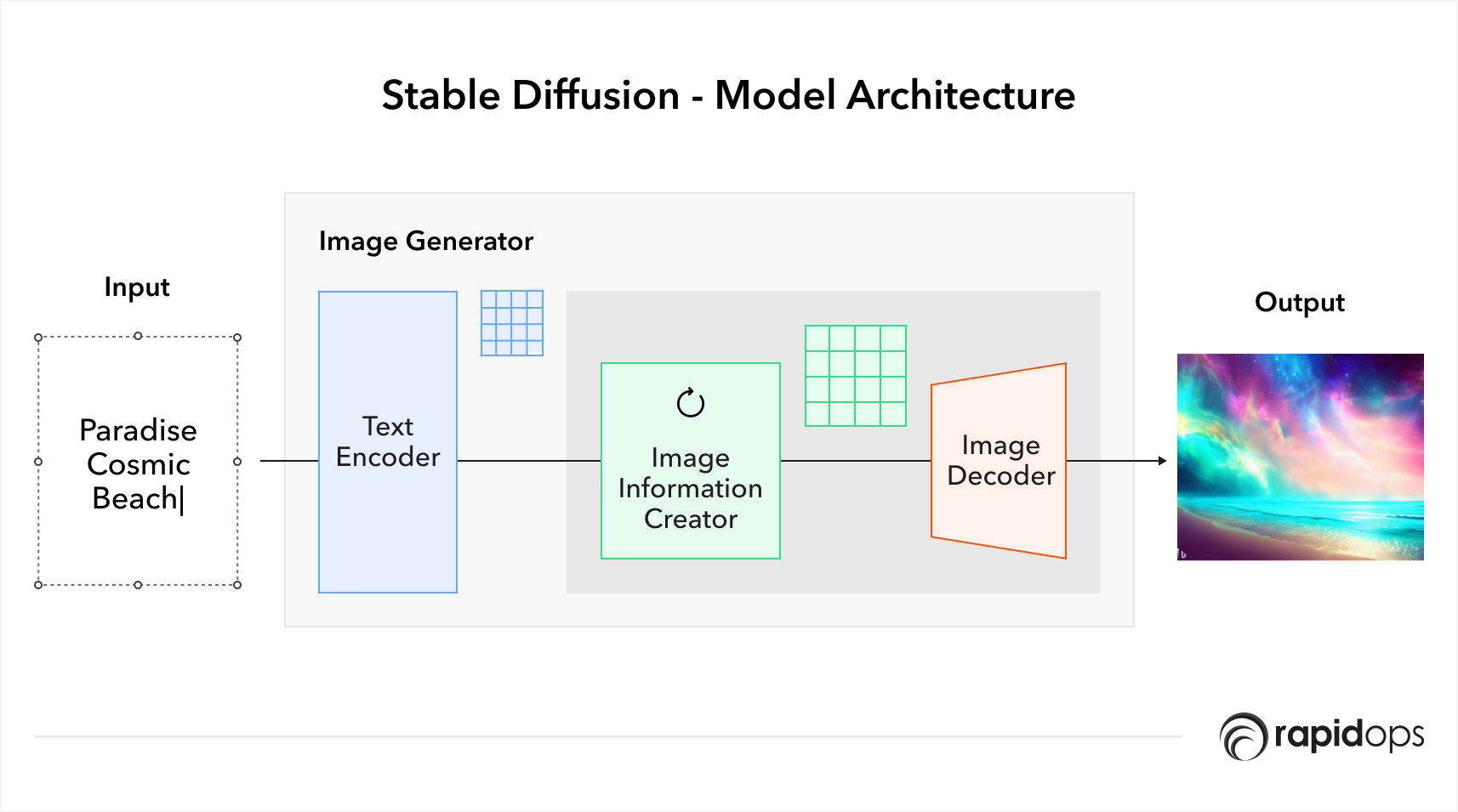

Technical Details

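Stable Diffusion is a latent diffusion model built from three main components: a text encoder, a variational autoencoder (VAE), and a denoising U-Net. The VAE encoder, a convolutional neural network (CNN), compresses an image into a much smaller latent representation, and the VAE decoder, also a CNN, reconstructs an image from that latent space using upsampling layers. The text encoder, a CLIP model, converts the prompt into embeddings that condition the U-Net through cross-attention. Unlike a generative adversarial network (GAN), the diffusion model is trained with a denoising objective: noise is added to image latents and the U-Net learns to predict that noise. At generation time, the U-Net starts from random latent noise and removes it step by step, guided by the prompt, before the decoder turns the final latent into an image.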
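The noising step that a diffusion model learns to reverse can be sketched in a few lines of plain Python. This is a minimal illustration, not Stable Diffusion's actual code: the function names are hypothetical, the linear schedule values (1e-4 to 0.02 over 1000 steps) are the common DDPM defaults chosen here as an assumption, and a short list of floats stands in for a real latent tensor. The `latent_shape` helper assumes the 4-channel, 8x-downsampled latent used by the version 1 models.

```python
import math
import random


def make_alpha_bars(num_steps=1000, beta_start=1e-4, beta_end=0.02):
    """Cumulative products of (1 - beta_t) for a linear noise schedule.

    The schedule values are illustrative assumptions, not the exact
    configuration shipped with Stable Diffusion.
    """
    alpha_bars = []
    running = 1.0
    for t in range(num_steps):
        beta = beta_start + (beta_end - beta_start) * t / (num_steps - 1)
        running *= 1.0 - beta
        alpha_bars.append(running)
    return alpha_bars


def add_noise(latent, t, alpha_bars, rng):
    """Forward diffusion: x_t = sqrt(abar_t) * x_0 + sqrt(1 - abar_t) * eps.

    Returns the noisy latent and the noise that was added. During training,
    the denoising network is asked to predict that noise from the noisy latent.
    """
    signal_scale = math.sqrt(alpha_bars[t])
    noise_scale = math.sqrt(1.0 - alpha_bars[t])
    noise = [rng.gauss(0.0, 1.0) for _ in latent]
    noisy = [signal_scale * x + noise_scale * e for x, e in zip(latent, noise)]
    return noisy, noise


def latent_shape(height, width, channels=4, downscale=8):
    """Latent size for an image, assuming 4 channels and 8x downsampling."""
    return (channels, height // downscale, width // downscale)


alpha_bars = make_alpha_bars()
toy_latent = [1.0, -1.0, 0.5]  # stand-in for a real latent tensor
rng = random.Random(0)

# Early steps keep most of the signal; late steps are almost pure noise.
slightly_noisy, _ = add_noise(toy_latent, 10, alpha_bars, rng)
mostly_noise, _ = add_noise(toy_latent, 999, alpha_bars, rng)

print(latent_shape(512, 512))  # (4, 64, 64)
```

Under these assumptions, a 512x512 RGB image (786,432 values) is represented by 16,384 latent values, which is the main reason the denoising loop is cheap enough for consumer GPUs.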

Capabilities

- Instant Visual Magic: Stable Diffusion quickly turns plain text into detailed images. A prompt of just a few words is enough to produce a vivid rendering of the idea it describes.

- Seamless Integration and Accessibility: Stable Diffusion can be installed and run locally on a gaming PC with at least 6.9 GB of VRAM and a modern GPU, such as a recent NVIDIA card. Smartphones cannot yet run it, but the Stable Diffusion website offers a user-friendly alternative for trying it out immediately.
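The VRAM requirement can be turned into a simple pre-flight check. The sketch below is a hypothetical helper, not part of any Stable Diffusion tooling; it takes the 6.9 GB figure from the text above and assumes that "GB" means 10^9 bytes.

```python
def meets_vram_requirement(total_vram_bytes, minimum_gb=6.9):
    """Return True if the reported VRAM is at least `minimum_gb` gigabytes.

    Assumption: one GB is read as 10**9 bytes. The 6.9 GB default comes
    from the requirement quoted in this article.
    """
    return total_vram_bytes >= minimum_gb * 10**9


# Example: an 8 GiB card passes, a 6 GiB card does not.
print(meets_vram_requirement(8 * 1024**3))
print(meets_vram_requirement(6 * 1024**3))
```

With PyTorch installed, the byte count could be read from `torch.cuda.get_device_properties(0).total_memory`; that call is left out here so the sketch runs on a machine without a GPU.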

Limitations

Stable Diffusion also has limitations that should be considered:

- Hardware Requirements: Running Stable Diffusion locally requires a gaming PC with at least 6.9 GB of VRAM and a modern GPU, such as a recent NVIDIA card. Smartphones are not currently supported, so mobile use depends on further advances.

- Language Dependency: Output quality is closely tied to the quality and specificity of the text prompt. Complex or ambiguous prompts may produce images that are less accurate or less satisfying.

- Training Data Influence: The model can only draw on what it saw during training. Prompts that fall outside the scope of its training dataset may lead to weaker results or unexpected visual artifacts.

Use Cases

- Media Production: Creators in film, video games, and advertising can use Stable Diffusion to generate realistic, visually striking images that support storytelling and immersive experiences.

- Personalization: The model can generate personalized images, such as avatars or social media profile pictures, that let individuals express their identity creatively.

- Scientific Research: Stable Diffusion has applications in scientific fields, including medical imaging and materials science, where generated images can support research, analysis, and visualization.

Stable Diffusion represents a significant advance in text-to-image generation. Its ability to produce photorealistic images efficiently opens new avenues for creative and practical work. Challenges remain, including its hardware requirements and its sensitivity to prompt wording and training data, but the model has clear potential in media production, personalization, and scientific research. Stability AI's commitment to responsible AI practices, together with ongoing development and refinement, will shape how the technology evolves.
